Additionality is one of the most important principles behind carbon markets. In simple terms, a carbon project is considered “additional” only if the emissions reduction would not have happened without carbon finance or market incentives.

This distinction is becoming increasingly important in India as the Carbon Credit Trading Scheme (CCTS) begins shifting sustainability from voluntary reporting toward measurable market performance.

For example, if a renewable energy project in India is already financially viable because of falling solar costs, state subsidies, or existing policy support, then issuing carbon credits for the same activity may not represent a real environmental gain. The project would likely have happened even without carbon market participation.

Under a high-integrity carbon market, credits should only be issued for activities that genuinely create reductions beyond the business-as-usual scenario. Otherwise, the system risks rewarding projects for outcomes that were already economically inevitable.

This is why additionality has become a central concern for regulators, buyers, and verification platforms. In the emerging Indian carbon economy, proving impact is no longer about narratives or sustainability reports; it requires evidence, measurable baselines, and continuous verification.

Every carbon credit ever issued rests on one claim: this emission reduction would not have happened without us.

That claim is called additionality. And it is the most gamed, most misunderstood, and most consequential concept in carbon markets.

When additionality is assessed correctly, carbon finance flows to projects that genuinely need it: reforestation in remote degraded land, clean cookstoves in off-grid communities, avoided deforestation in high-pressure zones. When it's assessed poorly, the market issues credits for things that would have happened anyway: solar farms that were already profitable, forests that were never under threat, industrial efficiency upgrades that regulations already required.

This guide explains additionality clearly: what the three tests actually measure, where each one fails, and how satellite-based verification is replacing narrative-based assessment with empirical proof.

What Additionality Actually Means

The definition is simple: a project is additional if it would not have happened without carbon finance.

In practice, this means:

- A reforestation project that loses money without carbon revenue → additional

- A solar farm that's already commercially viable → not additional

- A forest protected because of carbon contracts → additional

- A forest that was never going to be cleared anyway → not additional

Non-additional credits are a serious problem, not just for buyers who overpay, but for the atmosphere. A credit issued for a non-additional project represents zero real emission reduction. It is phantom carbon accounting.

In 2023, an investigation by The Guardian, Die Zeit, and SourceMaterial, drawing on independent research, concluded that more than 90% of the Verra-certified rainforest offset credits it examined were likely 'phantom credits' that did not represent genuine carbon reductions. The primary reason was failed additionality: forests classified as 'at risk' were actually under minimal deforestation pressure. Carbon finance flowed to projects protecting forests that were never in danger, generating tens of millions of phantom credits that corporate buyers used to offset real emissions.

"Additionality is not a static property of a project. It is a time-sensitive economic condition. What was additional in 2018 is likely common practice in 2026."

— Sylithe Research

The Three Additionality Tests and Where Each Fails

Test 1: Financial Additionality

The financial test asks: is this project profitable without carbon revenue?

If a developer's own financial model shows the project hits their required return without any carbon credit income, it fails the financial test. Carbon finance must be the variable that turns a loss-making or marginal project into a viable one.

Where it fails: developers control their own financial models. IRR assumptions, discount rates, and cost estimates can all be adjusted to make a profitable project appear marginal. Consider a concrete case: a wind energy project in a region where wind power has already achieved grid parity, meaning it costs the same as or less than coal without subsidies. If a developer submits a financial model showing the project 'needs' carbon revenue to be viable, but local utilities are building identical wind farms without carbon contracts, the common practice test immediately flags the additionality claim. In that situation, the financial model has most likely been tuned to justify credits for a project that would have been built regardless.
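The financial test, and the ease with which its inputs can shift the result, can be sketched in a few lines of Python. This is a minimal illustration, not a registry methodology: the function names, the bisection-based IRR, and every figure below are assumptions made up for the example.

```python
def npv(rate, cash_flows):
    """Net present value of yearly cash flows; cash_flows[0] is year 0."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))


def irr(cash_flows, lo=-0.99, hi=1.0, tol=1e-7):
    """Internal rate of return found by bisection.

    Assumes one upfront outflow followed by inflows, so NPV falls as the
    rate rises and crosses zero exactly once between lo and hi.
    """
    if npv(lo, cash_flows) < 0 or npv(hi, cash_flows) > 0:
        raise ValueError("IRR is not bracketed by [lo, hi]")
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if npv(mid, cash_flows) > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2


def financial_test(capex, annual_revenue, annual_carbon_revenue, years, hurdle_rate):
    """Pass only if the hurdle rate is missed without carbon revenue and met with it."""
    without = irr([-capex] + [annual_revenue] * years)
    with_carbon = irr([-capex] + [annual_revenue + annual_carbon_revenue] * years)
    return {
        "irr_without_carbon": without,
        "irr_with_carbon": with_carbon,
        "additional": without < hurdle_rate <= with_carbon,
    }


# Hypothetical figures, chosen only to illustrate the mechanics.
realistic = financial_test(capex=1000, annual_revenue=200,
                           annual_carbon_revenue=30, years=10, hurdle_rate=0.10)
inflated = financial_test(capex=1300, annual_revenue=200,
                          annual_carbon_revenue=30, years=10, hurdle_rate=0.10)
print(realistic["additional"], inflated["additional"])  # prints: False True
```

In this made-up case, raising the stated capital cost by 30% is enough to turn a project that clears its hurdle rate on its own into one that appears to need carbon revenue. That is why the cost and revenue inputs need checking against independent evidence.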

Test 2: Barrier Analysis

Some projects are financially attractive but blocked by real obstacles: no local technical expertise, regulatory uncertainty, political risk, lack of supply chains. The barrier test asks whether carbon finance specifically helped overcome those obstacles.

Where it fails: barriers are easy to describe in a project document. 'Limited local capacity' and 'institutional barriers' appear in thousands of project documents without concrete evidence that those barriers existed or that carbon finance resolved them.

Test 3: Common Practice Analysis

This is the most objective test. It asks: are projects like this already happening in this region without carbon finance?

If solar farms are being built across a region driven by falling costs and government subsidies, a new solar project in that region is following common practice, not creating additional impact. The common practice test acts as a sanity check on the financial and barrier claims.

Where it fails: 'region' and 'similar projects' are poorly defined in most methodologies. Developers can select narrow comparison groups that make their project look like an outlier when it isn't.
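A small sketch shows how much the choice of comparison group matters. The `Project` record, the `common_practice_share` function, and the project counts are all hypothetical; real methodologies define comparability in far more detail.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Project:
    region: str
    technology: str
    has_carbon_finance: bool


def common_practice_share(projects, technology, regions):
    """Share of comparable projects in the chosen regions built without carbon finance.

    Returns None when the comparison group is empty (test inconclusive).
    """
    comparable = [p for p in projects
                  if p.technology == technology and p.region in regions]
    if not comparable:
        return None
    unfinanced = sum(1 for p in comparable if not p.has_carbon_finance)
    return unfinanced / len(comparable)


# Hypothetical landscape: district A has two carbon-financed solar farms,
# while neighbouring districts B and C have eight built without carbon finance.
projects = (
    [Project("A", "solar", True)] * 2
    + [Project("B", "solar", False)] * 5
    + [Project("C", "solar", False)] * 3
)

narrow = common_practice_share(projects, "solar", {"A"})
wide = common_practice_share(projects, "solar", {"A", "B", "C"})
print(narrow, wide)  # prints: 0.0 0.8
```

With the comparison limited to district A, the technology looks rare without carbon finance. Widening the comparison to the neighbouring districts shows that most comparable projects were built without it.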

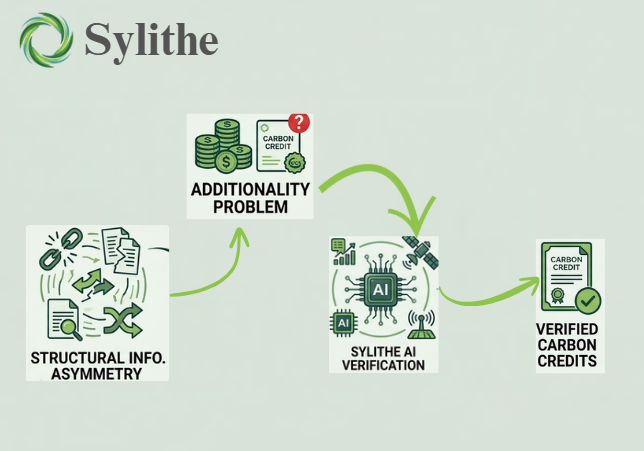

The core problem

All three tests share the same weakness: they rely on information the developer controls. Financial models, barrier descriptions, and comparison regions are all chosen by the entity with the most to gain from a positive additionality finding.

Why Additionality Failures Are Systematic, Not Accidental

The additionality problem isn't caused by bad actors. It's caused by bad incentives built into the system.

- Developers earn more revenue with more credits: a financial incentive to maximize additionality claims

- Auditors are paid by developers: a structural conflict of interest in validation decisions

- Methodologies update slowly: what was additional five years ago may be standard practice today, but old methodologies keep issuing credits

- Buyers historically chose the lowest-price credits: this created market pressure against rigorous standards

The result is a market where additionality is frequently narrated rather than demonstrated. Projects pass because their documentation is well-written, not because their impact is real.

How Sylithe Proves Additionality Empirically

Sylithe's approach replaces developer narratives with satellite-observed evidence.

Landscape-Scale Common Practice Assessment

Instead of relying on a developer's chosen comparison region, our AI models scan the entire surrounding landscape to establish what is actually happening without carbon finance. If reforestation is occurring widely in a region driven by government subsidies, our system detects that pattern and flags it, objectively establishing whether a new project is genuinely additional or following the trend.

Control Area Observation

Our Dynamic Control Area Baseline model continuously monitors statistically matched unprotected areas surrounding a project. If those control areas show deforestation or degradation while the project area remains intact, the difference is direct empirical evidence of additionality: the project is preventing outcomes that are actively occurring nearby.

In a project assessment we conducted across a degraded forest landscape in Madhya Pradesh, our model identified 18 statistically matched control areas in the surrounding region. Over a 4-year observation period, control areas experienced an average 12.3% canopy cover loss driven by agricultural encroachment and charcoal production. The project area, which had carbon contracts in place and active community monitoring, showed 0.8% canopy loss over the same period. That 11.5 percentage point difference is empirical additionality: not a narrative, not a financial model, but an observed outcome.
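The arithmetic behind that comparison is simple enough to write down. The sketch below is illustrative only and is not the Dynamic Control Area Baseline model itself; the function name is an assumption, and because the per-area values for the 18 control areas are not given here, the reported average stands in for them.

```python
from statistics import mean


def additionality_gap(control_losses_pct, project_loss_pct):
    """Average control-area canopy loss minus project-area loss, in percentage points."""
    return mean(control_losses_pct) - project_loss_pct


# Figures from the Madhya Pradesh assessment described above. Only the
# control-area average is reported, so it is passed as a single value.
gap = additionality_gap([12.3], 0.8)
print(round(gap, 1))  # prints: 11.5
```

With the individual control-area values available, the same function would take all 18, and a fuller analysis would also check that the gap is larger than the spread between the control areas themselves.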

Temporal Monitoring of Additionality

Additionality is not permanent. As technology costs fall and regulations change, projects that were additional five years ago may no longer qualify. Our continuous monitoring pipeline re-evaluates additionality conditions annually, ensuring that credits are only issued for periods when the project is genuinely making a difference that wouldn't happen otherwise.
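One way to picture annual re-evaluation is as a per-year gate on crediting. The following is a hypothetical sketch rather than a description of the monitoring pipeline: the check names and yearly results are invented for illustration.

```python
def creditable_years(yearly_checks):
    """Return, in order, the years in which every additionality check passed."""
    return [year for year, checks in sorted(yearly_checks.items())
            if all(checks.values())]


# Hypothetical results: by 2024 the project type has become common practice.
yearly_checks = {
    2022: {"financial": True, "common_practice": True, "control_area": True},
    2023: {"financial": True, "common_practice": True, "control_area": True},
    2024: {"financial": True, "common_practice": False, "control_area": True},
}
print(creditable_years(yearly_checks))  # prints: [2022, 2023]
```

The point of the design is that a project is not approved once and credited indefinitely; each crediting period has to pass on its own evidence.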

What Buyers Should Demand

If you are purchasing carbon credits, additionality is the first question to ask. Here is what separates credible claims from weak ones:

- Independent financial validation: not just the developer's own model, but third-party review of cost and revenue assumptions

- Satellite-verified control areas: observable evidence that similar unprotected areas are experiencing the degradation the project claims to be preventing

- Dynamic common practice analysis: ongoing assessment of whether the project type is becoming standard practice in the region

- Transparent methodology: full documentation of how additionality was assessed, with data sources accessible for independent review

The buyer's test

Ask your credit provider: 'What would have happened to this project without carbon finance?' If the answer is a narrative rather than a data-backed financial analysis and satellite-verified control area comparison, the additionality claim is not yet credible.

Additionality is the bridge between financial value and atmospheric impact. If that bridge is built on narratives instead of data, every credit on it is at risk.

How Sylithe approaches additionality

Our verification platform combines control area monitoring, landscape-scale common practice assessment, and continuous temporal validation, replacing one-time narrative reviews with ongoing empirical evidence. If you're developing or purchasing carbon credits and want additionality you can defend under scrutiny, we should talk.